Practical guidance on AI risk decisioning, financial compliance, and machine learning in regulated environments. Written by the Prism Layer team.

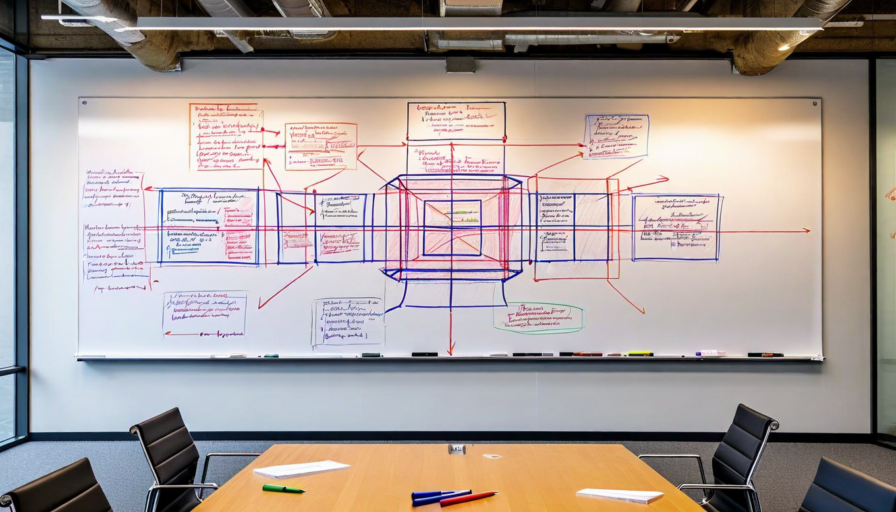

The gap between AI hype and operational reality in lending has never been wider. Here is what the shift actually requires.

Regulators are no longer satisfied with black-box credit models. Here is what explainable AI looks like in practice.

Volume spikes, adversarial patterns, and regulatory constraints all hit at once. Here is how to build a system that survives them.

Performance degradation in production models is inevitable. The question is whether you see it first or your regulators do.

Bolting compliance on after the fact is expensive and brittle. Here is how to design it in from the start.

Not every claim needs an adjuster. AI triage changes the economics of claims handling when deployed correctly.

Point solutions create integration debt that compounds. Here is why API-first architecture wins in the long run.

When a regulator asks why your model made a specific decision, you need more than a confidence score. You need a trail.

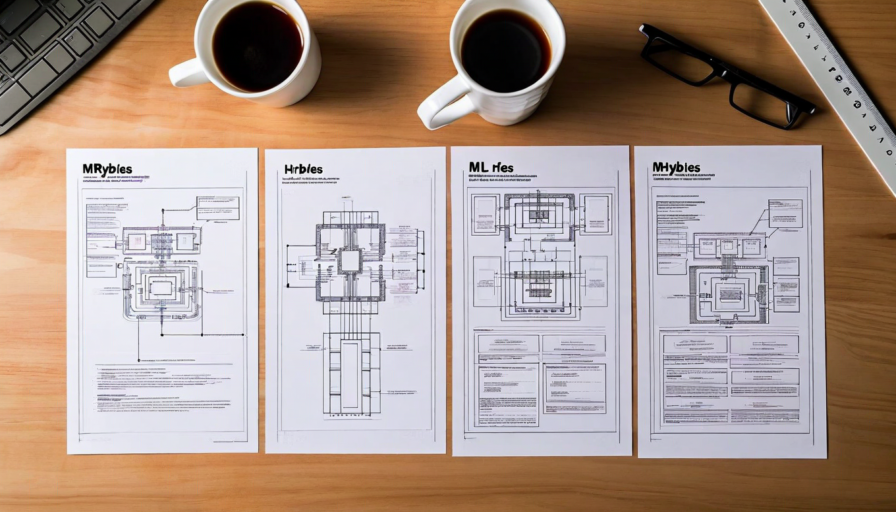

Ensemble methods improve accuracy, but they make explainability harder. Here is how to have both.

Fair lending violations in AI systems often appear only at scale. Testing for them must happen before you get there.

Each architecture has its place. Here is how to choose the right one based on your operational context and regulatory exposure.

The founding story of Prism Layer: where the idea came from and why we believed the problem was worth solving.
