The academic case for ensemble methods in machine learning is well established. A well-built combination of model outputs typically matches or outperforms its best single component on most prediction tasks, including financial risk scoring. The accuracy gains from blending a gradient boosting model, a neural network, and a traditional scorecard are real and measurable.

The practical case in regulated financial services is more complicated. Ensemble methods create an accountability problem that single models do not. When a decision is produced by a blend of three models, each with its own feature set and its own internal logic, the question "why did the model make this decision" becomes significantly harder to answer. And in credit, fraud, and insurance, that question is not optional. Regulators ask it. Consumers have the legal right to ask it. And when you cannot answer it cleanly, you have a compliance exposure that compounds with every automated decision you make.

The goal — maintaining ensemble accuracy while preserving accountability — is achievable. But it requires deliberate architecture, not just model selection.

The Explainability Challenge in Ensembles

A single logistic regression scorecard is straightforward to explain. Each input feature has a coefficient. The contribution of each feature to the final score is directly calculable. Adverse action reason codes map cleanly to the features with the largest negative contributions.
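As a minimal sketch of that calculation: the coefficients, feature names, and baseline applicant below are invented for illustration and are not taken from any real scorecard. Each feature's contribution is its coefficient times the applicant's deviation from the baseline, and the reason codes are the features with the most negative contributions.

```python
import math

# Hypothetical scorecard: coefficients on the log-odds of repayment.
INTERCEPT = 1.2
COEFFICIENTS = {
    "utilization_ratio": -2.0,
    "months_since_delinquency": 0.03,
    "recent_inquiries": -0.25,
    "years_of_credit_history": 0.08,
}
# Hypothetical reference applicant (for example, population means).
BASELINE = {
    "utilization_ratio": 0.30,
    "months_since_delinquency": 36.0,
    "recent_inquiries": 1.0,
    "years_of_credit_history": 10.0,
}

def log_odds(applicant):
    """Linear score: intercept plus coefficient times feature value."""
    return INTERCEPT + sum(COEFFICIENTS[n] * applicant[n] for n in COEFFICIENTS)

def probability(applicant):
    """Logistic transform of the linear score."""
    return 1.0 / (1.0 + math.exp(-log_odds(applicant)))

def contributions(applicant):
    """Per-feature contribution to the log-odds, relative to the baseline."""
    return {n: c * (applicant[n] - BASELINE[n]) for n, c in COEFFICIENTS.items()}

def reason_codes(applicant, top_n=2):
    """Features with the largest negative contributions, most negative first."""
    negative = sorted((v, k) for k, v in contributions(applicant).items() if v < 0)
    return [k for _, k in negative[:top_n]]

applicant = {
    "utilization_ratio": 0.85,
    "months_since_delinquency": 6.0,
    "recent_inquiries": 4.0,
    "years_of_credit_history": 3.0,
}
print(reason_codes(applicant))
```

Because the model is linear in the log-odds, the contributions sum exactly to the difference between the applicant's score and the baseline's score, which is what makes the mapping to reason codes clean.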

A gradient boosting model is harder to explain. Global feature importance scores show which features matter across the population, but they do not tell you how much each feature contributed to an individual decision. SHAP values solve part of this problem by providing decision-level feature attribution, but they can be computationally expensive at production volumes and do not always produce the intuitive explanations that regulators and consumers expect.

A neural network is harder still. Feature attribution methods like SHAP and LIME produce approximate explanations that are useful for analysis but cannot be certified as accurate representations of internal model logic.

An ensemble of all three compounds these challenges. The weighted combination of three models with different feature sets and different explainability properties produces a score that is accurate but difficult to decompose into a clean explanation. The reason codes for the ensemble are not straightforwardly derivable from the reason codes of the component models.
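A toy illustration of that last point, under simplifying assumptions: the per-model attributions below are invented, and they are assumed to be additive and on a common scale, which real component models with different feature sets will not give you for free. Even in this friendly setting, the ensemble's leading reason is a feature that no component model ranks first.

```python
from collections import defaultdict

# Hypothetical blend weights and per-model attributions for one applicant.
# Negative values push the score toward decline.
WEIGHTS = {"gbm": 0.5, "neural_net": 0.3, "scorecard": 0.2}
ATTRIBUTIONS = {
    "gbm": {"utilization": -0.40, "inquiries": -0.35},
    "neural_net": {"thin_file": -0.50, "inquiries": -0.45},
    "scorecard": {"delinquency": -0.30, "inquiries": -0.28},
}

def top_reason(attribution):
    """Feature with the most negative attribution."""
    return min(attribution, key=attribution.get)

def blend(attributions, weights):
    """Weighted sum of attributions across component models."""
    blended = defaultdict(float)
    for model, attribution in attributions.items():
        for feature, value in attribution.items():
            blended[feature] += weights[model] * value
    return dict(blended)

component_tops = {model: top_reason(a) for model, a in ATTRIBUTIONS.items()}
ensemble_top = top_reason(blend(ATTRIBUTIONS, WEIGHTS))

print(component_tops)  # each model names a different leading reason
print(ensemble_top)    # the blend's leading reason appears in none of them
```

Collecting the top reason code from each component would miss the feature that matters most to the blended score, which is why the ensemble's reason codes cannot simply be assembled from the component lists.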

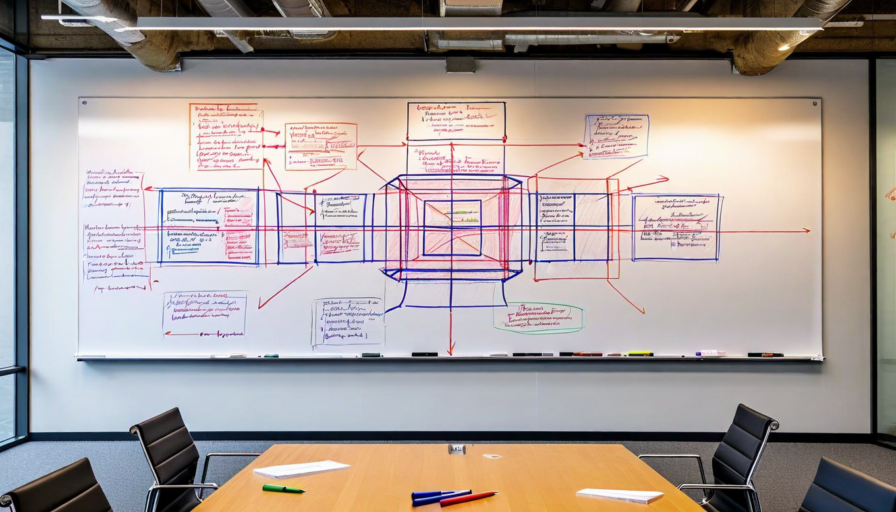

The Architecture That Resolves the Tension

The resolution is to separate the ensemble scoring layer from the explanation layer, and to design both layers deliberately rather than deriving the explanation from the ensemble after the fact.

The ensemble produces a highly accurate composite risk score. That score drives the decision. But the reason codes that accompany the decision are not derived by decomposing the ensemble. They are produced by a separate explanation model, often called a surrogate model, that is trained specifically for explainability, using the ensemble's final decision as its target. The explanation model is interpretable by design: typically a constrained regression that operates on a curated set of features selected for their regulatory appropriateness and their human interpretability.

This architecture produces reason codes that are legally defensible and expressed in terms that make sense to the consumer, and that stay accurate to the decision as long as the explanation model's agreement with the ensemble is measured and kept high. The ensemble provides accuracy. The explanation layer provides accountability. Neither compromises the other.
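The two-layer design can be sketched in a few dozen lines. Everything here is an assumption made for illustration: `ensemble_declines` is a stand-in for a real ensemble, the three features are hypothetical, and the explanation model is a plain logistic regression fitted by gradient descent rather than the constrained regression a production system would likely use. The point is the shape of the approach: train on the ensemble's decisions, then measure how often the explanation model agrees with them on held-out applicants.

```python
import math
import random

# Hypothetical curated features, each scaled to [0, 1].
FEATURES = ["utilization", "recent_inquiries", "credit_history"]

def ensemble_declines(x):
    """Stand-in for the production ensemble, treated as a black box (assumption)."""
    u, q, h = x
    risk = 0.6 * u + 0.3 * q - 0.3 * h + 0.2 * u * q  # includes an interaction term
    return 1 if risk > 0.35 else 0

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def decline_log_odds(w, b, x):
    return b + sum(wi * xi for wi, xi in zip(w, x))

def fit_explanation_model(rows, labels, steps=500, lr=2.0):
    """Logistic regression fitted by batch gradient descent on the ensemble's decisions."""
    n, d = len(rows), len(rows[0])
    w, b = [0.0] * d, 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(rows, labels):
            err = sigmoid(decline_log_odds(w, b, x)) - y
            grad_b += err
            for i in range(d):
                grad_w[i] += err * x[i]
        b -= lr * grad_b / n
        w = [wi - lr * gi / n for wi, gi in zip(w, grad_w)]
    return w, b

def reason_codes(w, x, baseline, top_n=2):
    """Features pushing this applicant furthest toward decline, relative to the baseline."""
    contrib = {name: wi * (xi - bi) for name, wi, xi, bi in zip(FEATURES, w, x, baseline)}
    ranked = sorted(contrib, key=contrib.get, reverse=True)
    return [name for name in ranked[:top_n] if contrib[name] > 0]

random.seed(0)
applicants = [[random.random() for _ in FEATURES] for _ in range(600)]
decisions = [ensemble_declines(x) for x in applicants]
train_x, holdout_x = applicants[:400], applicants[400:]
train_y, holdout_y = decisions[:400], decisions[400:]

weights, bias = fit_explanation_model(train_x, train_y)

# Fidelity: share of held-out applicants where the explanation model
# reaches the same decision as the ensemble.
agree = sum(
    (decline_log_odds(weights, bias, x) > 0) == bool(y)
    for x, y in zip(holdout_x, holdout_y)
)
fidelity = agree / len(holdout_x)

baseline = [sum(col) / len(col) for col in zip(*train_x)]
example = [0.9, 0.8, 0.1]  # high utilization, many inquiries, short history
print(round(fidelity, 3), reason_codes(weights, example, baseline))
```

Fidelity is the number to watch. If agreement with the ensemble drops, the reason codes are describing a model that is no longer making the decisions, so a threshold on this metric is a natural candidate for a deployment gate.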

Governance Considerations for Multi-Model Systems

Multi-model systems create governance complexity that single-model systems do not. Each component model needs its own validation, its own performance monitoring, and its own update cycle. The ensemble weighting itself is a parameter that requires documentation and governance. And the interaction between component models — how disagreements between models are resolved, how uncertainty is propagated through the ensemble — needs to be specified and validated.
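One way to make the weighting and the disagreement rule explicit is to put both in a validated configuration object. The sketch below is hypothetical: the class name, the tolerance value, and the policy of routing high-disagreement cases to review are assumptions chosen for illustration, not a description of any particular platform.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class EnsembleConfig:
    weights: dict                   # component name -> blend weight
    disagreement_tolerance: float   # largest acceptable spread between component scores

    def __post_init__(self):
        # Weights are a governed parameter, so reject configurations that do not sum to 1.
        if not math.isclose(sum(self.weights.values()), 1.0):
            raise ValueError("ensemble weights must sum to 1")

def score_with_disagreement(config, component_scores):
    """Blend component scores and flag cases where the components disagree too much."""
    missing = set(config.weights) - set(component_scores)
    if missing:
        raise ValueError(f"missing component scores: {sorted(missing)}")
    used = [component_scores[name] for name in config.weights]
    blended = sum(config.weights[name] * component_scores[name] for name in config.weights)
    spread = max(used) - min(used)
    return {
        "score": blended,
        "spread": spread,
        "needs_review": spread > config.disagreement_tolerance,
    }

config = EnsembleConfig(
    weights={"gbm": 0.5, "neural_net": 0.3, "scorecard": 0.2},
    disagreement_tolerance=0.25,
)
consistent = score_with_disagreement(config, {"gbm": 0.62, "neural_net": 0.58, "scorecard": 0.70})
conflicted = score_with_disagreement(config, {"gbm": 0.20, "neural_net": 0.75, "scorecard": 0.40})
print(consistent["needs_review"], conflicted["needs_review"])
```

Keeping the rule in code next to the weights means a change to either one is a versioned, reviewable event rather than an undocumented tuning decision.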

Model versioning in a multi-model system also requires careful design. If a component model is retrained or replaced, the behavior of the ensemble changes even if the other components remain constant. The audit trail needs to identify the specific version of each component model that contributed to every decision, not just the ensemble version.
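A minimal sketch of what such an audit record might contain. The field names and version strings are hypothetical, and a real system would also need durable, tamper-evident storage, which is out of scope here.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace

@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    ensemble_version: str
    component_versions: dict        # component name -> exact model version
    explanation_model_version: str
    score: float
    reason_codes: tuple
    decided_at: str                 # ISO 8601 timestamp

    def fingerprint(self):
        """Stable hash of the full record, so later changes are detectable."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

record = DecisionRecord(
    decision_id="D-0001",
    ensemble_version="ens-3",
    component_versions={"gbm": "gbm-7", "neural_net": "nn-2", "scorecard": "sc-11"},
    explanation_model_version="expl-4",
    score=0.624,
    reason_codes=("utilization", "recent_inquiries"),
    decided_at="2024-01-15T09:30:00+00:00",
)

# Same ensemble version label, but one component has been retrained.
after_retrain = replace(
    record,
    decision_id="D-0002",
    component_versions={**record.component_versions, "gbm": "gbm-8"},
)
print(record.fingerprint() != after_retrain.fingerprint())
```

Recording only `ensemble_version` would make these two decisions look as if the same system produced them. Recording the component versions shows that it did not.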

What This Means in Production

The multi-model risk scoring approach Prism Layer uses reflects these architectural requirements. The platform maintains up to 12 integrated model outputs in a weighted ensemble, with each component model independently versioned and monitored. The explanation layer runs alongside the ensemble and produces reason codes that are validated against actual adverse action requirements before deployment.

The result is a system where accuracy and accountability are not in tension. The ensemble produces better decisions than any single model could. The explanation layer makes those decisions defensible. And the governance infrastructure ensures that both layers are operating correctly, continuously, and in documented alignment with regulatory requirements.

That combination — better decisions and better documentation — is what multi-model risk scoring looks like when it is done correctly. The accuracy gains are real. The accountability does not have to be sacrificed to get them.